AI-based language analysis has recently gone through a “paradigm shift” (Bommasani et al., 2021, p. 1), thanks in part to a new technique referred to as the transformer language model (Vaswani et al., 2017; Liu et al., 2019). Companies including Google, Meta, and OpenAI have released such models, including BERT, RoBERTa, and GPT, which have achieved unprecedented improvements across most language tasks such as web search and sentiment analysis. While these language models are accessible in Python, and for typical AI tasks through HuggingFace, the R package text makes HuggingFace and state-of-the-art transformer language models accessible as social scientific pipelines in R.

Introduction

We developed the text package (Kjell, Giorgi & Schwartz, 2022) with two objectives in mind:

- To serve as a modular solution for downloading and using transformer language models. This includes, for example, transforming text to word embeddings as well as accessing common language model tasks such as text classification, sentiment analysis, text generation, question answering, translation and so on.

- To provide an end-to-end solution that is designed for human-level analyses, including pipelines for state-of-the-art AI techniques tailored for predicting characteristics of the person that produced the language or eliciting insights about linguistic correlates of psychological attributes.

This blog post shows how to install the text package, transform text to state-of-the-art contextual word embeddings, use language analysis tasks, and visualize words in word embedding space.

Installation and setting up a Python environment

The text package sets up a Python environment to get access to the HuggingFace language models. The first time you use the text package after installing it, you need to run two functions: textrpp_install() and textrpp_initialize().

# Install text from CRAN

install.packages("text")

library(textual content)

# Install the Python packages that text requires in a conda environment (with defaults)

textrpp_install()

# Initialize the installed conda environment

# save_profile = TRUE saves the settings so that you do not have to run textrpp_initialize() again after restarting R

textrpp_initialize(save_profile = TRUE)

See the extended installation guide for more information.

Transform text to word embeddings

The textEmbed() function is used to transform text to word embeddings (numeric representations of text). The model argument lets you set which language model to use from HuggingFace; if you have not used the model before, it will automatically download the model and the necessary files.

# Transform the text data to BERT word embeddings

# Note: To run faster, try something smaller: model = 'distilroberta-base'.

word_embeddings <- textEmbed(texts = "Hey, how are you doing?",

model = 'bert-base-uncased')

word_embeddings

comment(word_embeddings)

The word embeddings can now be used for downstream tasks such as training models to predict related numeric variables (e.g., see the textTrain() and textPredict() functions).

(To get token and individual layers output, see the textEmbedRawLayers() function.)
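Because word embeddings are plain numeric vectors, they can be compared with ordinary vector arithmetic. The following is a minimal base R sketch of cosine similarity, a common way to compare two embeddings. The three vectors and the helper cosine_similarity() are made up for illustration and are not output from, or part of, the text package.

```r
# Toy 4-dimensional "embeddings"; the numbers are invented for illustration.
# (Real embeddings from bert-base-uncased have a hidden size of 768.)
emb_happy   <- c(0.9, 0.1, 0.3, 0.0)
emb_joyful  <- c(0.8, 0.2, 0.4, 0.1)
emb_invoice <- c(0.0, 0.9, 0.1, 0.7)

# Hypothetical helper: cosine similarity is the dot product of two vectors
# divided by the product of their Euclidean norms.
cosine_similarity <- function(a, b) {
  sum(a * b) / (sqrt(sum(a^2)) * sqrt(sum(b^2)))
}

sim_close <- cosine_similarity(emb_happy, emb_joyful)
sim_far   <- cosine_similarity(emb_happy, emb_invoice)

# Vectors that point in similar directions score closer to 1.
print(c(close = sim_close, far = sim_far))
```

With real data, the same comparison could be applied to the numeric columns of the embeddings returned by textEmbed().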

There are many transformer language models at HuggingFace that can be used for various language model tasks such as text classification, sentiment analysis, text generation, question answering, translation and so on. The text package comprises user-friendly functions to access these.

classifications <- textClassify("Hey, how are you doing?")

classifications

comment(classifications)

generated_text <- textGeneration("The meaning of life is")

generated_text

For more examples of available language model tasks, see, for example, textSum(), textQA(), textTranslate(), and textZeroShot() under Language Analysis Tasks.

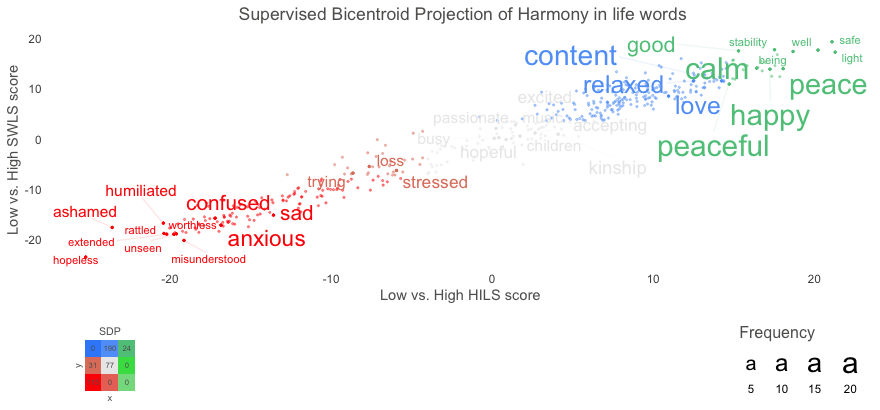

Visualizing words in the text package is achieved in two steps: first with a function to pre-process the data, and second with a function to plot the words, including adjusting visual characteristics such as color and font size.

To demonstrate these two functions we use example data included in the text package: Language_based_assessment_data_3_100. We show how to create a two-dimensional figure with words that individuals have used to describe their harmony in life, plotted according to two different well-being questionnaires: the harmony in life scale and the satisfaction with life scale. So, the x-axis shows words that are related to low versus high harmony in life scale scores, and the y-axis shows words related to low versus high satisfaction with life scale scores.
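As rough intuition for how words can be placed along a "low versus high" axis, the base R sketch below projects word embeddings onto the direction between two group centroids. It is a simplified illustration under stated assumptions, not the text package's implementation: the embeddings, the per-word scores, and the median split are all hypothetical, and the package additionally tests which words are significant (note the p_alpha and p_adjust_method arguments in the plotting call).

```r
# Hypothetical toy data: 6 words with 3-dimensional embeddings (rows = words).
word_embeddings_toy <- rbind(
  peace    = c( 1.0, 0.2, 0.1),
  balance  = c( 0.9, 0.1, 0.0),
  calm     = c( 0.8, 0.3, 0.2),
  stress   = c(-0.9, 0.1, 0.1),
  conflict = c(-1.0, 0.2, 0.0),
  worry    = c(-0.8, 0.0, 0.2)
)

# Hypothetical mean scale score of the respondents who used each word.
word_scores <- c(peace = 30, balance = 28, calm = 27,
                 stress = 12, conflict = 10, worry = 14)

# Split the words into a low and a high group at the median score.
high <- word_scores > median(word_scores)

# Centroid (mean embedding) of each group.
centroid_high <- colMeans(word_embeddings_toy[high, , drop = FALSE])
centroid_low  <- colMeans(word_embeddings_toy[!high, , drop = FALSE])

# Direction from the low centroid to the high centroid, scaled to unit length.
direction <- centroid_high - centroid_low
direction <- direction / sqrt(sum(direction^2))

# Center each word at the midpoint between the centroids, then take the
# dot product with the direction to get its position on the axis.
midpoint <- (centroid_high + centroid_low) / 2
projection <- as.vector(sweep(word_embeddings_toy, 2, midpoint) %*% direction)
names(projection) <- rownames(word_embeddings_toy)

print(round(projection, 2))
```

Words with positive values fall on the "high" side of the axis and words with negative values on the "low" side; repeating the procedure with a second score gives a second axis for a two-dimensional plot.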

word_embeddings_bert <- textEmbed(Language_based_assessment_data_3_100,

aggregation_from_tokens_to_word_types = "mean",

keep_token_embeddings = FALSE)

# Pre-process the data for plotting

df_for_plotting <- textProjection(Language_based_assessment_data_3_100$harmonywords,

word_embeddings_bert$text$harmonywords,

word_embeddings_bert$word_types,

Language_based_assessment_data_3_100$hilstotal,

Language_based_assessment_data_3_100$swlstotal

)

# Plot the data

plot_projection <- textProjectionPlot(

word_data = df_for_plotting,

y_axes = TRUE,

p_alpha = 0.05,

title_top = "Supervised Bicentroid Projection of Harmony in life words",

x_axes_label = "Low vs. High HILS score",

y_axes_label = "Low vs. High SWLS score",

p_adjust_method = "bonferroni",

points_without_words_size = 0.4,

points_without_words_alpha = 0.4

)

plot_projection$final_plot

This post demonstrates how to carry out state-of-the-art text analysis in R using the text package. The package intends to make it easy to access and use transformer language models from HuggingFace to analyze natural language. We look forward to your feedback and contributions toward making such models available for social scientific and other applications more typical of R users.

- Bommasani et al. (2021). On the opportunities and risks of foundation models.

- Kjell et al. (2022). The text package: An R-package for Analyzing and Visualizing Human Language Using Natural Language Processing and Deep Learning.

- Liu et al. (2019). RoBERTa: A robustly optimized BERT pretraining approach.

- Vaswani et al. (2017). Attention is all you need. Advances in Neural Information Processing Systems, 5998–6008.

Corrections

If you see mistakes or want to suggest changes, please create an issue on the source repository.

Reuse

Text and figures are licensed under Creative Commons Attribution CC BY 4.0. Source code is available at https://github.com/OscarKjell/ai-blog, unless otherwise noted. The figures that have been reused from other sources don't fall under this license and can be recognized by a note in their caption: “Figure from …”.

Citation

For attribution, please cite this work as

Kjell, et al. (2022, Oct. 4). Posit AI Blog: Introducing the text package. Retrieved from https://blogs.rstudio.com/tensorflow/posts/2022-09-29-r-text/

BibTeX citation

@misc{kjell2022introducing,

author = {Kjell, Oscar and Giorgi, Salvatore and Schwartz, H Andrew},

title = {Posit AI Blog: Introducing the text package},

url = {https://blogs.rstudio.com/tensorflow/posts/2022-09-29-r-text/},

year = {2022}

}