This post was written with Lokesha Thimmegowda, Muppirala Venkata Krishna Kumar, and Maraka Vishwadev of CBRE.

CBRE is the world’s largest commercial real estate services and investment firm. The company serves clients in more than 100 countries and offers services ranging from capital markets and leasing advisory to investment management, project management, and facilities management.

CBRE uses AI to improve commercial real estate solutions with advanced analytics, automated workflows, and predictive insights. The opportunity to unlock value with AI in the commercial real estate lifecycle starts with data at scale. With the industry’s largest dataset and a comprehensive suite of enterprise-grade technology, the company has implemented a range of AI solutions to boost individual productivity and support broad-scale transformation.

This blog post describes how CBRE and AWS partnered to transform how property management professionals access information, creating a next-generation search and digital assistant experience that unifies access across many types of property data using Amazon Bedrock, Amazon OpenSearch Service, Amazon Relational Database Service, Amazon Elastic Container Service, and AWS Lambda.

Unified property management search challenges

CBRE’s proprietary PULSE system consolidates a wide range of essential property data, covering structured data from relational databases that record transactions and unstructured data stored in document repositories containing everything from lease agreements to property inspections. Previously, property management professionals had to sift through millions of documents and switch between multiple systems to locate property maintenance details. Data was scattered across 10 distinct sources and 4 separate databases, which made it hard to get complete answers. This fragmented setup reduced productivity and made it difficult to uncover key insights about property operations.

Experts in property management, not database syntax, needed to ask complex questions in natural language, quickly synthesize disparate information, and avoid manual review of lengthy documents.

The challenge: deliver an intuitive, unified search solution bridging structured and unstructured content, with strong security and enterprise-grade performance and reliability.

Solution architecture

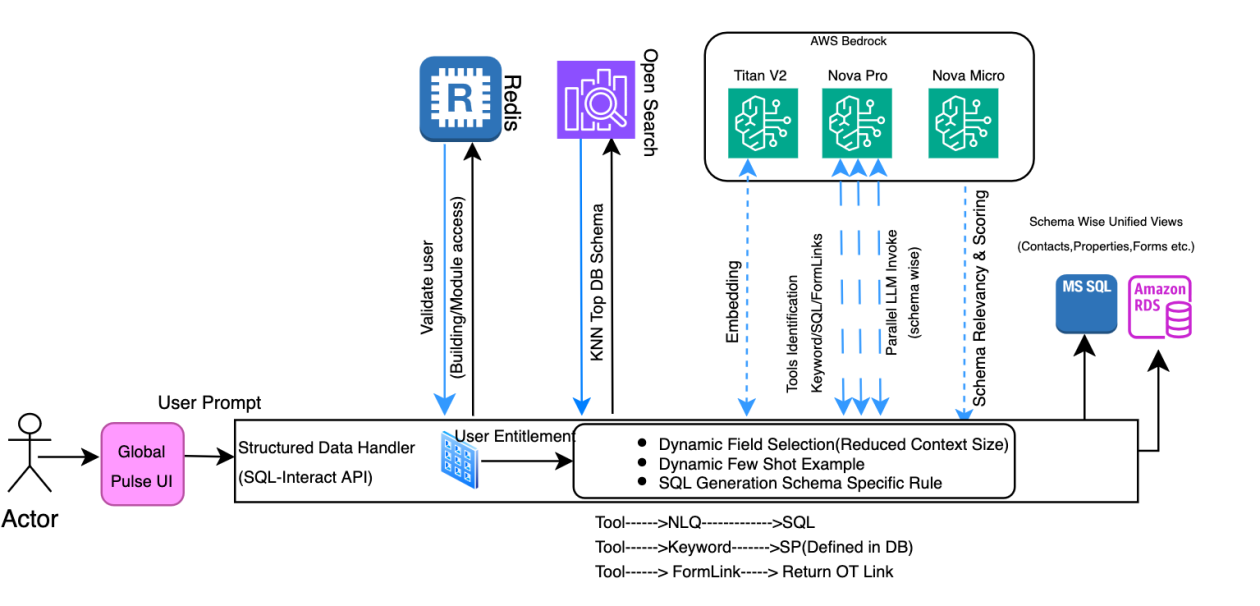

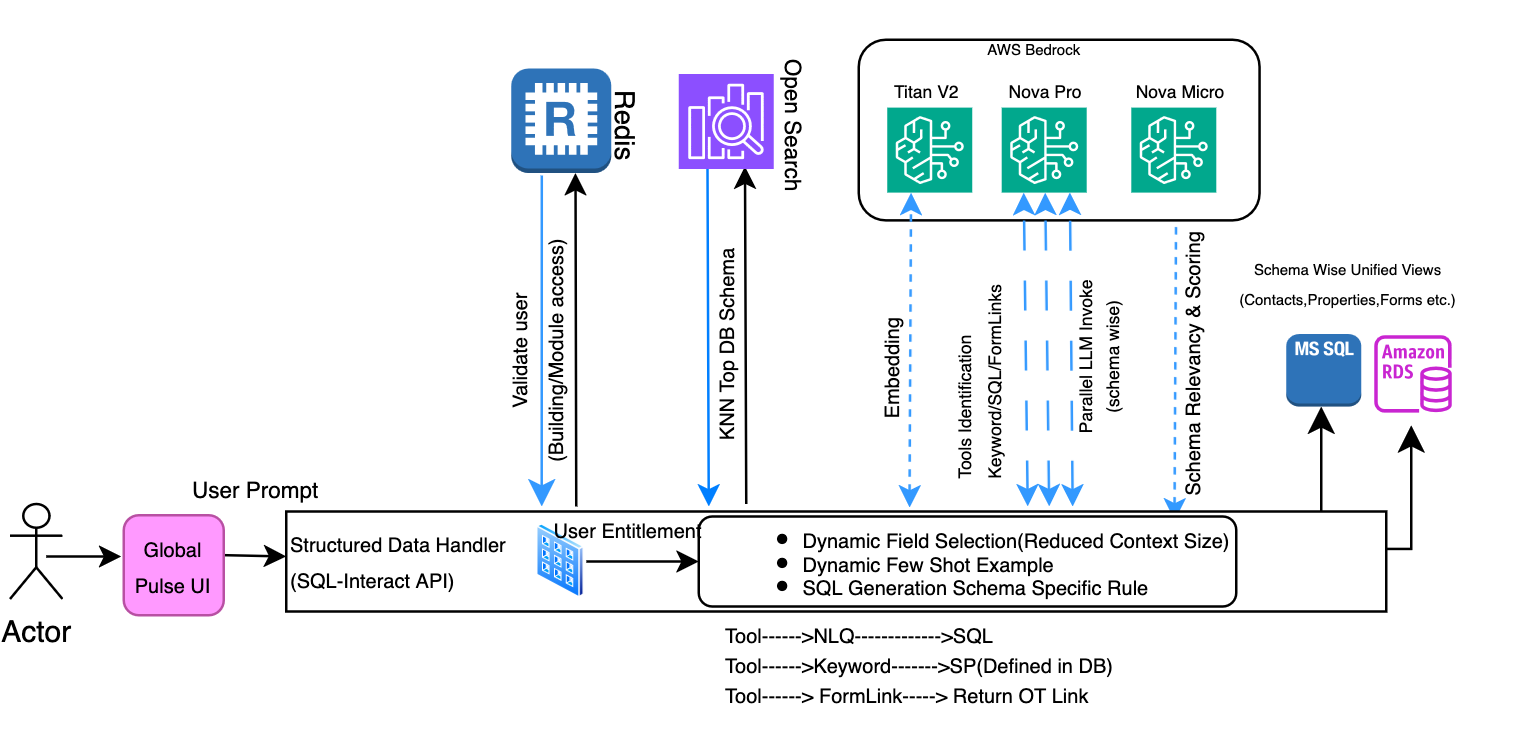

CBRE implemented a global search solution within PULSE, powered by Amazon Bedrock, to address these challenges. The search architecture is designed for a seamless, intelligent, and secure information retrieval experience across diverse data types. It orchestrates an interplay of user interaction, AI-driven processing, and robust data storage.

CBRE’s PULSE search solution uses Amazon Bedrock for the rapid deployment of generative AI capabilities, using multiple foundation models through a single API. CBRE’s implementation uses Amazon Nova Pro for SQL query generation, achieving a 67% reduction in processing time, while Claude Haiku powers intelligent document interactions. The solution maintains enterprise-grade security for all property data. By combining Amazon Bedrock capabilities with Retrieval Augmented Generation (RAG) and Amazon OpenSearch Service, CBRE created a unified search experience across more than eight million documents and multiple databases, fundamentally transforming how property professionals access and analyze business-critical information.

The following diagram illustrates the architecture of the solution that CBRE implemented in AWS:

Let us go through the flow for the solution:

- Property Manager and PULSE UI: Property managers interact through the intuitive PULSE user interface, which serves as the gateway for both traditional keyword searches and natural language queries (NLQ). The UI displays search results, supports document conversations, and presents intelligent summaries on desktop and mobile.

- Dynamic search execution: When users submit requests, the system first retrieves user-specific permissions from Amazon ElastiCache for Redis, chosen for its low latency and high throughput. Search operations across Amazon OpenSearch and transactional databases are then constrained by these user-specific permissions, making sure users only access authorized results with real-time granular control.

- Orchestration layer: This central control hub serves as the application’s brain, receiving user requests from the PULSE UI and intelligently routing them to appropriate backend services. Key responsibilities include:

    - Routing queries to relevant data systems (structured databases, unstructured documents, or both for deep search).

    - Initiating parallel searches across the SQL Interact and DocInteract components.

    - Merging, de-duplicating, and ranking results from disparate sources for unified results.

    - Managing conversation history through Amazon DynamoDB integration.

- SQL Interact component (structured data search): This pathway manages interactions with structured relational databases (RDBMS) through these key steps:

    - 4.1 Database metadata retrieval: Dynamically fetches schema details (for example, table names, column names, data types, relationships, constraints) for entities like property, contacts, and tenants from an Amazon OpenSearch index.

    - 4.2 Amazon Bedrock LLM (Amazon Nova Pro): Interprets the user’s natural language query alongside schema metadata, translating it into accurate, optimized SQL queries tailored to the database. Using Amazon Nova Pro, the solution reduced SQL query generation time from an average of 12 seconds to 4 seconds.

    - 4.3 RDBMS systems (PostgreSQL, MS SQL): The actual transactional databases, such as PostgreSQL and MS SQL, which house the core structured property management data (for example, properties, contacts, tenants, K2 forms). They execute the LLM-generated SQL queries and return the structured tabular results to the SQL Interact component.

- DocInteract component (unstructured document search): This pathway is specifically designed for intelligent search and interaction with unstructured documents.

    - 5.1 Vector store (OpenSearch cluster): Stores documents, including those from OpenText, as high-dimensional vectors for efficient semantic search using techniques like k-Nearest Neighbors, while prioritizing speed and accuracy with metadata filtering.

    - 5.2 Amazon Bedrock LLM (Claude Haiku): Interprets NLQs and translates them into optimized OpenSearch DSL queries, while powering the “Chat With AI” feature for direct document interaction, producing concise, conversational responses including answers, summaries, and natural dialogue.
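
To make the orchestration layer's fan-out and merge step more concrete, the following is a minimal Python sketch, not CBRE's implementation. The result shape (`id`, `building_id`, `score`) and the two stand-in search functions are assumptions made purely for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Assumed result shape: a dict with "id", "building_id", and "score".
Result = dict
SearchFn = Callable[[str, set[str]], list[Result]]


def orchestrate(query: str, building_ids: set[str], backends: list[SearchFn]) -> list[Result]:
    """Run every backend in parallel, then merge, de-duplicate, and rank."""
    with ThreadPoolExecutor(max_workers=len(backends)) as pool:
        futures = [pool.submit(fn, query, building_ids) for fn in backends]
        batches = [f.result() for f in futures]

    best: dict[str, Result] = {}
    for batch in batches:
        for hit in batch:
            # Drop anything outside the caller's authorized buildings.
            if hit["building_id"] not in building_ids:
                continue
            # Keep only the highest-scoring copy of a duplicate id.
            if hit["id"] not in best or hit["score"] > best[hit["id"]]["score"]:
                best[hit["id"]] = hit
    return sorted(best.values(), key=lambda h: h["score"], reverse=True)


# Hypothetical stand-ins for the structured and unstructured search pathways.
def sql_search(query: str, building_ids: set[str]) -> list[Result]:
    return [{"id": "tenant-7", "building_id": "B1", "score": 0.9},
            {"id": "lease-3", "building_id": "B2", "score": 0.4}]


def doc_search(query: str, building_ids: set[str]) -> list[Result]:
    return [{"id": "lease-3", "building_id": "B2", "score": 0.7},
            {"id": "inspect-9", "building_id": "B9", "score": 0.8}]


results = orchestrate("lease terms", {"B1", "B2"}, [sql_search, doc_search])
```

In this sketch the permission check is repeated at merge time as a second line of defense; in the described system the searches themselves are already constrained by the user's permissions.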

Having established the core architecture with both the SQL Interact and DocInteract components, the following sections explore the specific optimizations and innovations implemented for each data type, starting with structured data search enhancements.

Structured data search

Building on the SQL Interact component outlined in the architecture, the PULSE Search application offers two search methods for accessing structured data in PostgreSQL and MS SQL. Keyword Search scans the fields and schemas for specific terms, providing comprehensive coverage of the entire data system. With Natural Language Query (NLQ) Search, users can interact with the databases using everyday language, which the system translates into database queries. Both methods help property managers efficiently locate and retrieve information across the database modules.

Database layer search performance enhancement at the SQL level

Our unique challenge involved implementing application-wide keyword searches that needed to scan across the columns in database tables, an unconventional requirement compared to traditional indexed, column-specific searches in RDBMS systems. This universal search capability was essential for user experience, allowing information discovery without knowing specific column names or data structures.

We used native full-text search capabilities in both PostgreSQL and Microsoft SQL Server databases:

- PostgreSQL Implementation:

- Microsoft SQL Server Implementation:

Note: Our implementation uses specialized text search columns (textsearchable_all_col) concatenating the searchable fields from the view pd_db_view_name, while ms_db_view_name represents a view created with full-text search indexing.

This optimization delivered an 80% improvement in query performance by harnessing native database capabilities, balancing comprehensive search coverage with database performance through specialized indexing algorithms.
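
As an illustration of what native full-text queries against these objects could look like, here is a hedged Python sketch that builds parameterized SQL for each engine. It assumes `textsearchable_all_col` is a `tsvector` column, that the `'english'` text search configuration applies, and that the SQL Server view has a full-text index; the helper function names and placeholder styles (`%s`, `?`) are illustrative choices, not the queries used in PULSE.

```python
def postgres_keyword_query(term: str) -> tuple[str, tuple]:
    """Build a PostgreSQL full-text query (assumes a tsvector column)."""
    sql = (
        "SELECT * FROM pd_db_view_name "
        "WHERE textsearchable_all_col @@ plainto_tsquery('english', %s)"
    )
    return sql, (term,)


def sqlserver_keyword_query(term: str) -> tuple[str, tuple]:
    """Build a SQL Server full-text query (assumes a full-text indexed view)."""
    # CONTAINS expects multi-word phrases in double quotes; drop embedded quotes.
    phrase = '"' + term.replace('"', "") + '"'
    sql = "SELECT * FROM ms_db_view_name WHERE CONTAINS(*, ?)"
    return sql, (phrase,)


pg_sql, pg_params = postgres_keyword_query("lease agreement")
ms_sql, ms_params = sqlserver_keyword_query("lease agreement")
```

Passing the search term as a bound parameter, rather than interpolating it into the SQL string, keeps user input out of the query text.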

Database layer search performance enhancement at the SQL Interact API level

We implemented several optimizations in database search functionality targeting three key performance indicators (KPIs): Accuracy (precision of results), Consistency (reproducible results), and Relevancy (making sure results align with user intent). The enhancements reduced response latency while simultaneously boosting these ACR metrics, resulting in faster and more trustworthy search results.

Prompt engineering modifications: We implemented a comprehensive approach to prompt management and optimization, focusing on the following elements.

- Configurability: We implemented modular prompt templates stored in external files to enable version control, simplified management, and reduced prompt size, improving performance and maintainability.

- Dynamic field selection for context window reduction: The system uses KNN-based similarity search to filter and select only the most relevant schema fields aligned with user intent, reducing context window size and optimizing prompt effectiveness.

- Dynamic few-shot example: The system selects the most relevant few-shot example from a configuration file using KNN-based similarity search for SQL generation. This context-aware approach makes sure that only the most pertinent example is included in the prompt, minimizing unnecessary data overhead. It helped in getting consistent and accurate SQL generation from the LLM.

- Business rule integration: The system maintains a centralized repository of business rules in a dedicated schema-wise configuration file, making rule management and updates streamlined and efficient. During prompt generation, relevant business rules are dynamically integrated into prompts, providing consistency in rule application while offering flexibility for updates and maintenance.

- LLM score-based relevancy: We added a fourth LLM call to evaluate and reorder schema relevance after initial KNN retrieval, addressing challenges where vector search returned irrelevant or poorly ordered schemas. For example, when processing a user query about property or contact information, the vector search might return three schemas, but:

- The third schema might be irrelevant to the query.

- The ordering of the two relevant schemas might not reflect their true relevancy to the query.

To address these challenges, we introduced an additional LLM processing step (a fourth, parallel LLM call) that:

- Evaluates the relevance of each schema to the user query.

- Assigns relevancy scores to determine schema importance.

- Reorders schemas based on their actual relevance to the query.

This enhancement improved our schema selection process by:

- Making sure only truly relevant schemas are selected.

- Maintaining proper relevancy ordering.

- Providing more accurate context for subsequent query processing.

The result was more precise, contextually appropriate responses and improved overall application performance.
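
The score-and-reorder step can be sketched as follows. This is an illustrative outline only: `score_fn` stands in for the extra LLM call, and the 0.5 cut-off, the schema names, and the scores are assumptions rather than values from the PULSE system.

```python
from typing import Callable


def rerank_schemas(
    query: str,
    candidates: list[str],
    score_fn: Callable[[str, str], float],
    min_score: float = 0.5,
) -> list[str]:
    """Score each KNN candidate, drop irrelevant ones, and reorder the rest."""
    scored = [(score_fn(query, schema), schema) for schema in candidates]
    kept = [pair for pair in scored if pair[0] >= min_score]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [schema for _, schema in kept]


def fake_llm_score(query: str, schema: str) -> float:
    """Hypothetical stub: a real system would ask an LLM for this score."""
    return {"property": 0.90, "contacts": 0.95, "invoices": 0.10}[schema]


# KNN returned three schemas in this order; the third is not relevant.
knn_order = ["property", "contacts", "invoices"]
ordered = rerank_schemas("Who is the contact for this property?", knn_order, fake_llm_score)
```

Here the irrelevant third schema is filtered out and the remaining two are reordered by score, mirroring the two failure cases described above.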

Parallel LLM inference for SQL generation with Amazon Nova Pro

We implemented a comprehensive parallel processing architecture for NLQ-to-SQL conversion, enhancing system performance and efficiency. The solution introduces concurrent schema-based API calls to the LLM inference engine, with asynchronous processing for multiple schema evaluations. Our security-first approach authenticates and validates user entitlements while performing context-aware schema identification that incorporates similarity search and enforces access permissions. The system only processes schemas for which the user has explicit authorization, providing foundational data security. Following authentication, the system dynamically generates prompts (as detailed in our prompt engineering framework) and initiates concurrent processing of the most relevant schemas through parallel LLM inference calls. Before execution, it enhances the generated SQL queries with mandatory security joins that enforce building-level access controls, restricting users to their authorized buildings only.

Finalized SQL queries are executed on the respective database systems (PostgreSQL or SQL Server). The system processes the query results and returns them as a structured API response, maintaining security and data integrity throughout the entire workflow. This architecture provides both performance through parallel processing and comprehensive security through multi-layered access controls.

This integrated approach incorporates concurrent validation of generated SQL queries, resulting in reduced processing time, improved system throughput, and lower inference latency with Amazon Nova Pro. The framework’s architecture supports efficient resource utilization while maintaining high accuracy in SQL query generation, making it particularly effective for handling complex database operations and high-volume query processing requirements.
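
The flow described in this section (filter schemas by entitlement, generate SQL for each schema concurrently, then add a building-level restriction) might be sketched like this. `generate_sql` is a hypothetical stand-in for an LLM inference call, and the sketch swaps the security joins for a simpler wrapping `WHERE` filter that assumes every result set exposes a `building_id` column.

```python
import asyncio


async def generate_sql(schema: str, question: str) -> str:
    """Hypothetical stand-in for one LLM inference call per schema."""
    await asyncio.sleep(0.01)  # simulates inference latency
    return f"SELECT * FROM {schema}.records"


def add_building_filter(sql: str, building_ids: list[int]) -> str:
    """Wrap generated SQL so only authorized buildings are returned."""
    if not building_ids:
        return f"SELECT * FROM ({sql}) AS q WHERE 1 = 0"  # no access at all
    id_list = ", ".join(str(int(b)) for b in building_ids)
    return f"SELECT * FROM ({sql}) AS q WHERE q.building_id IN ({id_list})"


async def nlq_to_sql(
    question: str,
    candidate_schemas: list[str],
    entitled_schemas: set[str],
    building_ids: list[int],
) -> dict[str, str]:
    # Only schemas the user is explicitly entitled to are ever sent to the LLM.
    allowed = [s for s in candidate_schemas if s in entitled_schemas]
    # One inference call per schema, all in flight at the same time.
    raw = await asyncio.gather(*(generate_sql(s, question) for s in allowed))
    return {s: add_building_filter(q, building_ids) for s, q in zip(allowed, raw)}


queries = asyncio.run(
    nlq_to_sql("open work orders", ["property", "finance"], {"property"}, [101, 102])
)
```

Because the calls run concurrently, total latency is close to that of the slowest single call rather than the sum of all calls.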

Enhancing unstructured data search

The PULSE document search uses two main methods, enhanced by purpose-built specialized search capabilities. Users can use the streamlined Keyword Search to precisely locate terms within documents and metadata for fast retrieval when exact search terms are known. This straightforward approach makes sure users can quickly locate exact matches across the entire document landscape. The second method, Natural Language Query (NLQ) Search, supports interaction with documents using everyday language, interpreting intent and converting queries into search parameters, which is particularly powerful for complex or concept-based queries. Complementing these core search methods, the system offers specialized search capabilities, including Favorites and Collections search, so users can efficiently navigate their personally curated document sets and shared collections. Additionally, the system provides intelligent document upload search functionality that helps users quickly locate appropriate document categories and upload locations based on document types and property contexts.

The search infrastructure supports a wide range of file formats, including PDFs, Microsoft Office documents (Word, Excel, PowerPoint), emails (MSG), images (JPG, PNG), text files, HTML files, and various other document types, providing comprehensive coverage across the document categories in the property management environment.

Prompt engineering and management optimization

Our Document Search system incorporates advanced prompt engineering techniques to enhance search accuracy, efficiency, and maintainability. Let’s explore the key features of our prompt management system and the value they bring to the search experience.

Two-stage prompt architecture and modular prompt management:

At the core of our system is a two-stage prompt architecture. This design separates tool selection from task execution for more efficient and accurate query processing.

This architecture reduces token usage by up to 60% by loading only the necessary prompts for each query processing stage. The lightweight initial stage quickly routes queries to appropriate tools, while specialized prompts handle the actual execution with focused context, improving both performance and accuracy in tool selection and query execution.

Our modular prompt management system stores prompts in external configuration files for dynamic loading based on context, which also supports personalization. It supports prompt updates without code deployments, cutting update cycles from hours to minutes. This architecture supports A/B testing of different prompt versions and quick rollbacks, enhancing system adaptability and reliability.

The system implements context-aware prompt selection, adapting to query types, document characteristics, and search contexts. This approach makes sure that the most appropriate prompt and query structure are used for each unique search scenario. For example, the system distinguishes between different question types (for example, ‘list_question’) for tailored processing of various query intents.
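
A two-stage prompt flow of this kind could be sketched as below. The in-memory `PROMPTS` dictionary stands in for the external configuration files, and every prompt text and tool name other than `list_question` is invented for illustration. The `llm` argument is any callable that takes a prompt and a query.

```python
from typing import Callable

# Stand-in for prompts loaded from external configuration files.
PROMPTS = {
    "router": "Pick one tool for the query: keyword_search, nlq_search, list_question.",
    "keyword_search": "Extract the exact phrases to match in documents and metadata.",
    "nlq_search": "Translate the request into a structured search query.",
    "list_question": "Return the matching documents as a concise list.",
}

LLM = Callable[[str, str], str]


def route(query: str, llm: LLM) -> str:
    """Stage 1: a small routing prompt chooses the tool."""
    tool = llm(PROMPTS["router"], query).strip()
    # Fall back to NLQ search if the model returns an unknown tool name.
    return tool if tool in PROMPTS and tool != "router" else "nlq_search"


def answer(query: str, llm: LLM) -> tuple[str, str]:
    """Stage 2: load only the chosen tool's prompt and execute it."""
    tool = route(query, llm)
    return tool, llm(PROMPTS[tool], query)


calls: list[str] = []


def fake_llm(prompt: str, query: str) -> str:
    """Hypothetical stub that records which prompts were actually sent."""
    calls.append(prompt)
    if prompt == PROMPTS["router"]:
        return "list_question" if query.lower().startswith("list") else "nlq_search"
    return f"handled: {query}"


tool, reply = answer("List all leases expiring in 2025", fake_llm)
```

Only two prompts are sent per query (the router and one specialized prompt), which is where the token savings in a design like this come from.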

Search algorithm optimization

Our document search system implements search algorithms that combine vector-based semantic search with traditional text-based approaches to search across document metadata and content. We use different query strategies optimized for specific search scenarios.

Keyword search:

Keyword search uses a dual strategy combining both metadata and content searches using phrase matching. A fixed query template structure provides efficiency and consistency, incorporating predefined metadata, content, permission rules, and building ID constraints, while dynamically integrating user-specific terms and roles. This approach allows for fast and reliable searches while maintaining proper access controls and relevance.

User queries like “lease agreement” or “property tax 2023” are parsed into component terms, each requiring a match in the document content for relevancy, producing precise results.

Similarly, for metadata searches, the system uses phrase searching across metadata fields.

This approach provides exact matching capabilities across document metadata, producing precise results when users are searching for specific document properties. The system executes both search types concurrently; results from both searches are then merged and deduplicated, with scoring normalized across both result sets.
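
For readers unfamiliar with OpenSearch query DSL, the two keyword query templates described above might resemble the following Python sketch. The field names (`content`, `title`, `document_type`, `building_id`) are assumptions for illustration; the real index mapping and template are not shown in this post.

```python
def build_content_query(user_query: str, building_ids: list[str],
                        content_field: str = "content") -> dict:
    """Require every term of the query to match in the document content."""
    terms = user_query.split()
    return {
        "query": {
            "bool": {
                "must": [{"match_phrase": {content_field: t}} for t in terms],
                # Access control: restrict hits to the user's buildings.
                "filter": [{"terms": {"building_id": building_ids}}],
            }
        }
    }


def build_metadata_query(user_query: str, building_ids: list[str],
                         metadata_fields: tuple[str, ...] = ("title", "document_type")) -> dict:
    """Match the whole query as a phrase across several metadata fields."""
    return {
        "query": {
            "bool": {
                "must": [{
                    "multi_match": {
                        "query": user_query,
                        "type": "phrase",
                        "fields": list(metadata_fields),
                    }
                }],
                "filter": [{"terms": {"building_id": building_ids}}],
            }
        }
    }


content_body = build_content_query("property tax 2023", ["B1", "B2"])
metadata_body = build_metadata_query("lease agreement", ["B1", "B2"])
```

Placing the building constraint in the `filter` clause keeps it out of relevance scoring while still excluding unauthorized documents.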

Natural language query search:

Our NLQ search combines LLM-generated queries with vector-based semantic search through two main components. The metadata search uses an LLM to generate OpenSearch queries from natural language input. For instance, “Find lease agreements mentioning early termination for tech companies from last year” is transformed into a structured query that searches across document types, dates, property names, and other metadata fields.

For content searches, we employ KNN vector search with a K-factor of 5 to identify semantically similar content. The system converts queries into vector embeddings and executes both metadata and content searches concurrently, combining results while minimizing duplicates.
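
A content search with k set to 5 could be expressed as an OpenSearch k-NN query body along the lines of this sketch. The vector field name `embedding` and the `building_id` filter field are assumptions, and whether a filter can be placed inside the `knn` clause depends on the k-NN engine and OpenSearch version in use.

```python
def build_knn_query(query_vector: list[float], building_ids: list[str],
                    k: int = 5, vector_field: str = "embedding") -> dict:
    """Build an OpenSearch k-NN query body restricted to authorized buildings."""
    return {
        "size": k,
        "query": {
            "knn": {
                vector_field: {
                    "vector": query_vector,
                    "k": k,
                    # Assumed filter field; applied during the vector search.
                    "filter": {"terms": {"building_id": building_ids}},
                }
            }
        },
    }


# A toy 4-dimensional vector; real embeddings have many more dimensions.
knn_body = build_knn_query([0.12, -0.03, 0.88, 0.41], ["B1", "B2"])
```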

Chat with Document (digital assistant for in-depth document interaction):

The Chat with Document feature supports natural conversation with specific documents after the initial search. Users can ask questions, request summaries, or seek specific information from selected documents through a straightforward interaction process.

When engaged, the system retrieves the complete document content using its node identifier and processes user queries through a streamlined pipeline. Each query is handled by an LLM using carefully constructed prompts that combine the user’s question with relevant document context.

With this capability, users can extract information from complex documents efficiently. For example, property managers can quickly understand lease terms or payment schedules without manually scanning lengthy agreements. The feature provides instant summaries and explanations for rapid information access and decision-making in document-intensive workflows.

Scaling document ingestion

To handle high-throughput document processing and large-scale enterprise ingestion, our ingestion pipeline uses asynchronous Amazon Textract for scalable, parallel text extraction. The architecture efficiently processes diverse file types (PDFs, PPTs, Word documents, Excel files, and images), even with hundreds of pages or high-resolution content. Once a document is uploaded to an Amazon S3 bucket, a message is sent to an Amazon SQS queue, invoking a Lambda function that initiates an asynchronous Textract job, offloading heavy extraction and OCR tasks without blocking execution.

For text documents, the system reads the file from Amazon S3 and submits it to Amazon Textract’s asynchronous API, which processes the document in the background. Once the job completes, the results are retrieved and parsed to extract structured text. This text is then chunked intelligently, based on token count or semantic boundaries, and passed through a Bedrock embedding model (for example, Amazon Titan Text Embeddings V2). Each chunk is enriched with metadata and indexed into Amazon OpenSearch for fast and context-aware search capabilities. Once ingested, our intelligent query strategy, driven by user and CBRE market lookups, dynamically directs searches to the relevant OpenSearch indexes.

Image files follow a similar flow but use Claude 3 Haiku on Amazon Bedrock for OCR after base64 conversion. Extracted text is then chunked, embedded, and indexed like standard text documents.
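
The token-count chunking step mentioned above can be illustrated with a short sketch. It approximates tokens with whitespace-separated words, and the overlap between chunks, the default sizes, and the metadata keys are assumptions added for illustration rather than details from the PULSE pipeline.

```python
def chunk_text(text: str, doc_id: str, max_tokens: int = 200, overlap: int = 20) -> list[dict]:
    """Split extracted text into overlapping chunks, each tagged with metadata."""
    if overlap >= max_tokens:
        raise ValueError("overlap must be smaller than max_tokens")
    tokens = text.split()  # whitespace words as a rough proxy for model tokens
    step = max_tokens - overlap
    chunks: list[dict] = []
    for start in range(0, len(tokens), step):
        window = tokens[start:start + max_tokens]
        chunks.append({
            "doc_id": doc_id,
            "chunk_index": len(chunks),
            "text": " ".join(window),
        })
        if start + max_tokens >= len(tokens):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
chunks = chunk_text(sample, doc_id="lease-3", max_tokens=4, overlap=1)
```

Each chunk dictionary would then be embedded and indexed; a production pipeline would count tokens with the embedding model's own tokenizer rather than by splitting on whitespace.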

Security and access control

User authentication and authorization occur through a multi-layered security process:

- Access token validation: The system verifies the user’s identity by validating the user ID in Microsoft B2C and their access token on each request. The user is also checked for authorization to access the application.

- Entitlement verification: Concurrently, the system checks the user’s permissions in a Redis database to verify they have the appropriate access rights to specific application modules and the database schemas (entitlements) they are authorized to query.

- Property access validation: The system also retrieves their authorized building list from the Redis database (the list of building IDs to which the user is mapped), making sure they can only access data related to properties within their business portfolio.

This parallel validation process provides secure and appropriate access while maintaining performance through Redis’s high-speed data retrieval capabilities. Redis is populated during application load from the user entitlement and building mappings maintained in the database. If the user details are not found in Redis, an API is invoked to replenish the Redis database.
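
The lookup-then-replenish behavior described above follows the common cache-aside pattern, sketched below. The key format, the record layout, and the `DictCache` test double are assumptions; in practice `cache` would be a Redis client exposing `get` and `set`, and `load_from_db` would be the API that reads the entitlement mappings.

```python
import json
from typing import Callable


class EntitlementCache:
    """Cache-aside lookup of a user's schemas and building IDs."""

    def __init__(self, cache, load_from_db: Callable[[str], dict]):
        self.cache = cache                # any object with get(key) / set(key, value)
        self.load_from_db = load_from_db  # fallback used on a cache miss

    def get(self, user_id: str) -> dict:
        key = f"entitlements:{user_id}"   # assumed key naming scheme
        raw = self.cache.get(key)
        if raw is None:
            # Miss: reload from the source of truth and replenish the cache.
            record = self.load_from_db(user_id)
            self.cache.set(key, json.dumps(record))
            return record
        return json.loads(raw)


class DictCache:
    """In-memory stand-in for a Redis client, for illustration only."""

    def __init__(self):
        self.data = {}

    def get(self, key):
        return self.data.get(key)

    def set(self, key, value):
        self.data[key] = value


db_calls: list[str] = []


def load_from_db(user_id: str) -> dict:
    db_calls.append(user_id)
    return {"schemas": ["property"], "building_ids": [101, 102]}


entitlements = EntitlementCache(DictCache(), load_from_db)
first = entitlements.get("u1")    # miss: loads from the database
second = entitlements.get("u1")   # hit: served from the cache
```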

Results and impact

CBRE’s experience with this initiative has led to enhanced operational efficiency and data reliability, directly translating into tangible business benefits:

- Cost savings and resource optimization: By reducing hours of manual effort annually per user, the business can realize substantial cost savings (for example, in labor costs, reduced overtime, or reallocated personnel). This frees up valuable user time so that the team can focus on more strategic, high-value tasks that drive building performance, innovation, and growth rather than repetitive manual processes.

- Improved decision-making and risk mitigation: The solution delivers results with 95% accuracy, so business decisions are based on highly reliable data. This minimizes the risk of errors, leading to more informed strategies, fewer costly mistakes, and ultimately, better business outcomes.

- Increased productivity and throughput: With less time spent on manual tasks and a higher assurance of data quality, workflows can become smoother and faster. This translates to increased overall productivity and potentially higher throughput for related processes, enhancing service delivery.

Lessons learned and best practices

The following are our lessons learned and best practices based on our experience building this solution:

- Use prompt modularization: Prompt engineering is essential for optimizing application performance and maintaining consistent results. Breaking prompts into modular components improved prompt management, enhanced control and maintainability through streamlined version control, simplified testing and validation processes, and improved performance monitoring capabilities. The modular approach to prompt design reduced token usage, which in turn decreased LLM response times and improved overall system performance. The modular approach also improves SQL generation efficiency through faster troubleshooting, reduced implementation time, and more reliable query generation, resulting in quicker resolution of edge cases and business rule updates.

- Provide accurate few-shot examples: For increased accuracy and consistency of SQL generation, use dynamic few-shot examples with modular components for seamless updates to the example repository.

    - Include examples covering common use cases and edge scenarios.

    - Maintain a diverse set of high-quality example pairs covering various business scenarios.

    - Keep examples concise and focused on specific patterns.

    - Regularly update examples based on new business requirements. Remove or update outdated examples.

    - Limit to the top-1 or top-2 most relevant examples to manage token usage.

    - Regularly validate the relevance of selected examples.

    - Set up feedback loops to continuously improve example matching accuracy.

    - Fine-tune similarity thresholds for optimal example matching.

- Reduce the context window: To reduce the size of the context passed to the model, select only the top-N KNN fields from the schema definition along with key/mandatory fields. Apply dynamic context field selection only to schemas that have a high number of fields and would otherwise increase the context window size.

- Improve relevancy: The LLM scoring mechanism helped us get the exact relevant set of schemas (modules). Applying LLM intelligence over the KNN results for relevant modules helped us get the most relevant ordered results. Also consider:

    - Vector similarity alone may not capture true semantic relevance.

    - Top-K nearest neighbors don’t always guarantee contextual accuracy.

    - Order of results may not reflect actual relevance to the query.

    - Use of LLM scoring provided a more accurate schema relevancy determination.
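
As a small illustration of the context-window practice above, the following sketch keeps mandatory fields and adds only the top-N fields most similar to the query. The toy two-dimensional vectors, field names, and the `min_fields` threshold are assumptions; a real system would use embeddings from its embedding model and a vector index rather than this brute-force ranking.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def select_fields(query_vec: list[float], field_vecs: dict[str, list[float]],
                  mandatory: set[str], top_n: int = 2, min_fields: int = 5) -> list[str]:
    """Keep mandatory fields plus the top-N most query-relevant fields."""
    if len(field_vecs) <= min_fields:
        return list(field_vecs)  # small schema: pass every field through
    optional = [f for f in field_vecs if f not in mandatory]
    ranked = sorted(optional, key=lambda f: cosine(query_vec, field_vecs[f]), reverse=True)
    return [f for f in field_vecs if f in mandatory] + ranked[:top_n]


field_vecs = {
    "building_id": [1.0, 0.0],
    "tenant_name": [0.9, 0.1],
    "lease_end": [0.1, 0.9],
    "rent": [0.2, 0.8],
    "notes": [0.5, 0.5],
    "phone": [1.0, 0.05],
}
selected = select_fields([0.0, 1.0], field_vecs, mandatory={"building_id"})
```

The `min_fields` guard reflects the advice to apply dynamic selection only to schemas with many fields.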

Conclusion

CBRE Property Management and AWS together demonstrated how innovative cloud AI solutions can unlock real business value at scale. By using AWS services and best practices, enterprises can reimagine how they access, manage, and derive insight from their data and take real action.

To learn how your organization can accelerate digital transformation with AWS, contact your AWS account team or start exploring AWS AI and data analytics services today.


About the authors

Lokesha Thimmegowda is a Senior Principal Software Engineer at CBRE, specializing in artificial intelligence and AWS. With four AWS certifications, including Solutions Architect Professional and AWS AI Practitioner, he excels at guiding teams through complex challenges with innovative solutions. Lokesha is passionate about designing transformative solution architectures that drive efficiency. Outside of work, he enjoys daily tennis with his daughters and weekend cricket.


Muppirala Venkata Krishna Kumar is a Principal Software Engineer at CBRE with over 18 years of expertise in leading technical teams and designing end-to-end solutions across diverse domains. He is a strategic technical lead with a strong command of both front-end and back-end technologies, cloud architecture using AWS, and AI/ML-driven innovations. He is passionate about staying at the forefront of technology, continuously learning, and implementing modern tools to drive impactful outcomes. Outside of work, he values quality time with family and enjoys spiritual travel experiences that bring balance and inspiration.


Maraka Vishwadev is a Senior Staff Engineer at CBRE with 18 years of experience in enterprise software development, specializing in backend and frontend technologies and AWS Cloud. He leads impactful initiatives in generative AI, using large language models to drive intelligent automation, enhance user experiences, and unlock new business capabilities. He is deeply involved in architecting and delivering scalable, secure, and cloud-native solutions, aligning technology with business strategy. Vishwa balances his professional life with cooking, movies, and quality family time.


Chanpreet Singh is a Senior Consultant at AWS with 18+ years of industry experience, specializing in Data Analytics and AI/ML solutions. He partners with enterprise customers to architect and implement cutting-edge solutions in Big Data, Machine Learning, and Generative AI using AWS native services, partner solutions, and open-source technologies. A passionate technologist and problem solver, he balances his professional life with nature exploration, reading, and quality family time.


Sachin Khanna is a Lead Consultant specializing in Artificial Intelligence and Machine Learning (AI/ML) within the AWS Professional Services team. With a strong background in data management, generative AI, large language models, and machine learning, he brings extensive expertise to projects involving data, databases, and AI-driven solutions. His proficiency in cloud migration and cost optimization has enabled him to guide customers through successful cloud adoption journeys, delivering tailored solutions and strategic insights.


Dwaragha Sivalingam is a Senior Solutions Architect specializing in generative AI at AWS, serving as a trusted advisor to customers on cloud transformation and AI strategy. With seven AWS certifications including ML Specialty, he has helped customers in many industries, including insurance, telecom, utilities, engineering, construction, and real estate. A machine learning enthusiast, he balances his professional life with family time, enjoying road trips, movies, and drone photography.
