When we launched Amazon SageMaker AI in 2017, we had a clear mission: put machine learning in the hands of any developer, regardless of their skill level. We wanted infrastructure engineers who were “total noobs in machine learning” to be able to achieve meaningful results in a week. To remove the roadblocks that made ML accessible only to a select few with deep expertise.

Eight years later, that mission has evolved. Today’s ML builders aren’t just training simple models; they’re building generative AI applications that require massive compute, complex infrastructure, and sophisticated tooling. The problems have gotten harder, but our mission remains the same: eliminate the undifferentiated heavy lifting so builders can focus on what matters most.

In the last year, I’ve met with customers who are doing incredible work with generative AI: training massive models, fine-tuning for specific use cases, building applications that would have seemed like science fiction just a few years ago. But in these conversations, I hear about the same frustrations. The workarounds. The impossible choices. The time lost to what should be solved problems. A few weeks ago, we released a few capabilities that address these friction points: securely enabling remote connections to SageMaker AI, comprehensive observability for large-scale model development, deploying models on your existing HyperPod compute, and training resilience for Kubernetes workloads. Let me walk you through them.

The workaround tax

Here’s a problem I didn’t expect to still be dealing with in 2025: developers having to choose between their preferred development environment and access to powerful compute.

I spoke with a customer who described what they called the “SSH workaround tax”: the time and complexity cost of trying to connect their local development tools to SageMaker AI compute. They had built an elaborate system of SSH tunnels and port forwarding that worked, sort of, until it didn’t. When we moved from classic to the latest version of SageMaker Studio, their workaround broke entirely. They had to make a choice: abandon their carefully customized VS Code setups with all their extensions and workflows, or lose access to the compute they needed for their ML workloads.

Builders shouldn’t have to choose between their development tools and cloud compute. It’s like being forced to choose between having electricity and having running water in your house: both are essential, and the choice itself is the problem.

The technical challenge was interesting. SageMaker Studio spaces are isolated managed environments with their own security model and lifecycle. How do you securely tunnel IDE connections through AWS infrastructure without exposing credentials or requiring customers to become networking experts? The solution needed to work for different types of users: some who wanted one-click access directly from SageMaker Studio, others who preferred to start their day in their local IDE and manage all their spaces from there. We needed to improve on the work that was done for SageMaker SSH Helper.

So, we built a new StartSession API that creates secure connections specifically for SageMaker AI spaces, establishing SSH-over-SSM tunnels through AWS Systems Manager that maintain all of SageMaker AI’s security boundaries while providing seamless access. For VS Code users coming from Studio, the authentication context carries over automatically. For those who want their local IDE as the primary entry point, administrators can provide local credentials that work through the AWS Toolkit VS Code plug-in. And most importantly, the system handles network interruptions gracefully and automatically reconnects, because we know builders hate losing their work when connections drop.
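To make the reconnect behavior a little more concrete, here is a minimal sketch of retrying a dropped connection with exponential backoff. It is an illustration only, not how SageMaker AI implements reconnection: `run_with_reconnect` and the `connect` callable are hypothetical names, and the delay values are assumptions.

```python
import time
from typing import Callable


def run_with_reconnect(
    connect: Callable[[], None],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Call connect() until it succeeds and return the number of attempts used.

    connect is a hypothetical callable that raises ConnectionError when the
    tunnel drops. The final failure is re-raised so the caller can surface it.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            connect()
            return attempt
        except ConnectionError:
            if attempt == max_attempts:
                raise
            # Double the wait after each failure, capped at max_delay.
            sleep(min(max_delay, base_delay * 2 ** (attempt - 1)))
    raise RuntimeError("unreachable")
```

Capping the delay keeps a long outage from pushing the wait between attempts to minutes, and injecting `sleep` makes the loop easy to exercise without real waiting.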

This addressed the number one feature request for SageMaker AI, but as we dug deeper into what was slowing down ML teams, we discovered that the same pattern was playing out at an even larger scale in the infrastructure that supports model training itself.

The observability paradox

The second problem is what I call the “observability paradox”. The very system designed to prevent problems becomes the source of problems itself.

When you’re running training, fine-tuning, or inference jobs across hundreds or thousands of GPUs, failures are inevitable. Hardware overheats. Network connections drop. Memory gets corrupted. The question isn’t whether problems will occur; it’s whether you’ll detect them before they cascade into catastrophic failures that waste days of expensive compute time.

To monitor these massive clusters, teams deploy observability systems that collect metrics from every GPU, every network interface, every storage device. But the monitoring system itself becomes a performance bottleneck. Self-managed collectors hit CPU limitations and can’t keep up with the scale. Monitoring agents fill up disk space, causing the very training failures they’re meant to prevent.

I’ve seen teams running foundation model training on hundreds of instances experience cascading failures that could have been prevented. A few overheating GPUs start thermal throttling, slowing down the entire distributed training job. Network interfaces begin dropping packets under increased load. What should be a minor hardware issue becomes a multi-day investigation across fragmented monitoring systems, while expensive compute sits idle.

When something does go wrong, data scientists become detectives, piecing together clues across fragmented tools: CloudWatch for containers, custom dashboards for GPUs, network monitors for interconnects. Each tool shows a piece of the puzzle, but correlating them manually takes days.

This was one of those situations where we saw customers doing work that had nothing to do with the actual business problems they were trying to solve. So we asked ourselves: how do you build observability infrastructure that scales with massive AI workloads without becoming the bottleneck it’s meant to prevent?

The solution we built rethinks observability architecture from the ground up. Instead of single-threaded collectors struggling to process metrics from thousands of GPUs, we implemented auto-scaling collectors that grow and shrink with the workload. The system automatically correlates high-cardinality metrics generated within HyperPod using algorithms designed for massive-scale time series data. It detects not just binary failures, but what we call gray failures: partial, intermittent problems that are hard to detect but slowly degrade performance. Think GPUs that automatically slow down due to overheating, or network interfaces dropping packets under load. And you get all of this out of the box, in a single dashboard based on our lessons learned training GPU clusters at scale, with no configuration required.
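As a rough illustration of the difference between a binary failure and a gray failure, the sketch below compares each GPU’s average clock speed with the fleet median and flags devices that are degraded but still running. This is a simplified assumption for the sake of example, not the algorithm HyperPod uses; the function name, the metric, and both thresholds are hypothetical.

```python
from statistics import median
from typing import Dict, List


def find_gray_failures(
    clock_mhz: Dict[str, List[float]],
    degraded_ratio: float = 0.85,  # assumed threshold: below 85% of fleet median
    dead_ratio: float = 0.05,      # assumed threshold: effectively not running
) -> Dict[str, str]:
    """Classify GPUs as 'gray_failure' or 'hard_failure' from recent clock samples.

    Healthy GPUs are omitted from the result.
    """
    means = {gpu: sum(s) / len(s) for gpu, s in clock_mhz.items() if s}
    if not means:
        return {}
    fleet = median(means.values())
    if fleet <= 0:
        return {}
    flagged: Dict[str, str] = {}
    for gpu, mean in means.items():
        ratio = mean / fleet
        if ratio <= dead_ratio:
            flagged[gpu] = "hard_failure"   # binary failure: the device is down
        elif ratio < degraded_ratio:
            flagged[gpu] = "gray_failure"   # still running, but throttled
    return flagged
```

Comparing against the fleet median rather than a fixed number means a throttled GPU stands out even when the whole cluster runs at a different clock speed than expected.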

Teams that used to spend days detecting, investigating, and remediating task performance issues now identify root causes in minutes. Instead of reactive troubleshooting after failures, they get proactive alerts when performance starts to degrade.

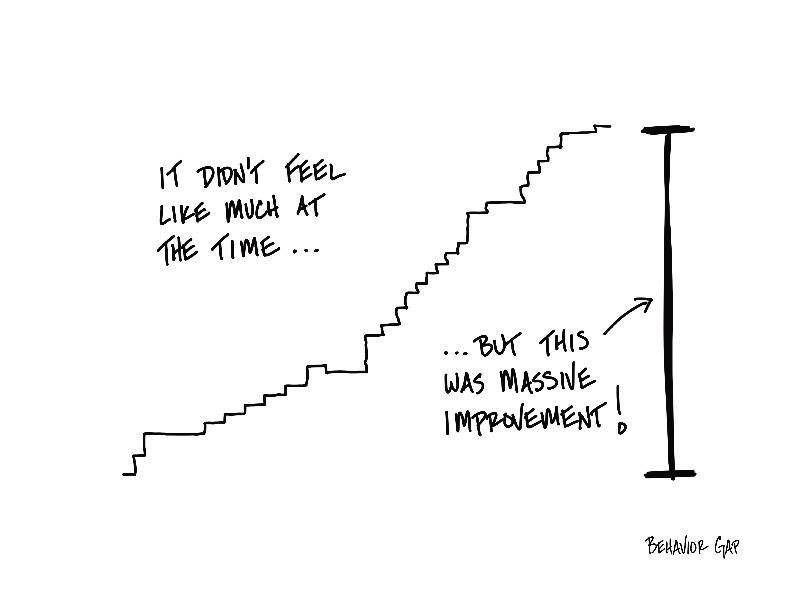

The compound impact

What strikes me about these problems is how they compound in ways that aren’t immediately obvious. The SSH workaround tax doesn’t just cost time; it discourages the kind of rapid experimentation that leads to breakthroughs. When setting up your development environment takes hours instead of minutes, you’re less likely to try that new approach or test that different architecture.

The observability paradox creates a similar psychological barrier. When infrastructure problems take days to diagnose, teams become conservative. They stick with smaller, safer experiments rather than pushing the boundaries of what’s possible. They over-provision resources to avoid failures instead of optimizing for efficiency. The infrastructure friction becomes innovation friction.

But these aren’t the only friction points we’ve been working to eliminate. In my experience building distributed systems at scale, one of the most persistent challenges has been the artificial boundaries we create between different phases of the machine learning lifecycle. Organizations maintain separate infrastructure for training models and serving them in production, a pattern that made sense when these workloads had fundamentally different characteristics, but one that has become increasingly inefficient as both have converged on similar compute requirements. With SageMaker HyperPod’s new model deployment capabilities, we’re eliminating this boundary entirely, allowing you to train your foundation models on a cluster and immediately deploy them on the same infrastructure, maximizing resource utilization while reducing the operational complexity that comes from managing multiple environments.

For teams using Kubernetes, we’ve added a HyperPod training operator that brings significant improvements to fault recovery. When failures occur, it restarts only the affected resources rather than the entire job. The operator also monitors for common training issues such as stalled batches and non-numeric loss values. Teams can define custom recovery policies through simple YAML configurations. These capabilities dramatically reduce both resource waste and operational overhead.
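To show what checks for stalled batches and non-numeric loss values can look like in principle, here is a minimal sketch of a training health monitor. It is not the HyperPod training operator’s implementation or its configuration format; `TrainingHealthMonitor`, its method names, and the 300-second default timeout are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class TrainingHealthMonitor:
    """Tracks step timestamps and loss values to flag two common training issues."""

    stall_timeout_s: float = 300.0  # assumed default, tune per workload
    last_step_time: Optional[float] = None

    def record_step(self, loss: float, now: float) -> Optional[str]:
        """Record a completed step; return an issue label if the loss is NaN or infinite."""
        self.last_step_time = now
        if not math.isfinite(loss):
            return "non_numeric_loss"
        return None

    def check_stalled(self, now: float) -> bool:
        """Return True if no step has completed within stall_timeout_s."""
        if self.last_step_time is None:
            return False  # nothing recorded yet, so there is no baseline
        return now - self.last_step_time > self.stall_timeout_s
```

Passing `now` in explicitly, instead of reading the clock inside the class, keeps the checks deterministic and lets a supervising process call `check_stalled` on its own schedule.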

These updates (securely enabling remote connections, autoscaling observability collectors, seamlessly deploying models from training environments, and improving fault recovery) work together to address the friction points that prevent builders from focusing on what matters most: building better AI applications. When you remove these friction points, you don’t just make existing workflows faster; you enable entirely new ways of working.

This continues the evolution of our original SageMaker AI vision. Each step forward gets us closer to the goal of putting machine learning in the hands of any developer, with as little undifferentiated heavy lifting as possible.

Now, go build!